A concept that inventor and futurist Ray Kurzweil drives home in his books is what he calls the Law of Accelerating Returns: the observation that technology growth (among other things) follows an exponential curve. He demonstrates this over no small number of pages for a variety of technologies and concepts. The most famous example is Moore’s Law, in which Gordon Moore (one of the founders of Intel Corporation) observed that the number of transistors on a die doubles in a fixed amount of time (about every two years). Kurzweil argues that this exponential growth pattern applies to both technological and biological evolution. In other words, progress grows exponentially in time. It should be clear that this is an observation rather than something derived from fundamental scientific theories.

What makes this backward-looking observation particularly interesting is that, even though it has held true over vast periods of time, humans are very linear thinkers and have a difficult time envisioning exponential growth rates forward in time. Kurzweil is a notable exception to that rule. Because of exponential growth, the technological progress we make in the next 50 years will not be the same as what we have realized in the last 50 years. It will be very much larger, almost unbelievably larger: the equivalent of the progress made in the last ~600 years. This is the nature of exponential growth (and why some people find Kurzweil’s predictions difficult to swallow).

Interestingly, when Derek Price surveyed the scientific literature in 1961, he readily observed exponential growth in scientific publications but dismissed it as unsustainable. To Price, that unsustainability was obvious. The survey was revisited in 2010 (citing the original work), and the exponential growth was still being observed 49 years later. So linear forecasting is a handicap that seems to persist even when the data to the contrary is staring us in the face.

On the other hand, we have biologist Stuart Kauffman. He introduced the concept of the Adjacent Possible, which was made more widely known in Steven Johnson’s excellent book, Where Good Ideas Come From. The Adjacent Possible is another backward-looking observation: it describes how biological complexity has progressed through the combining of whatever nature had on hand at the time. At first glance this sounds sort of bland and altogether obvious. But it is a hugely powerful statement when you dig a little deeper. It is a way of defining what change is possible: combining things that already exist is how things of greater complexity are formed. Said slightly differently, what is actual today defines what is possible tomorrow. And what becomes possible will then influence what can become actual. And so on. So while dramatic changes can happen, only certain changes are possible based on what is here now. And thus the set of actual/possible combinations expands in time, increasing the complexity of what’s in the toolbox.

Johnson describes it in this way:

“Four billion years ago, if you were a carbon atom, there were a few hundred molecular configurations you could stumble into. Today that same carbon atom, whose atomic properties haven’t changed one single nanogram, can help build a sperm whale or a giant redwood or an H1N1 virus, along with a near infinite list of other carbon-based life forms that were not part of the adjacent possible of prebiotic earth. Add to that an equally long list of human concoctions that rely on carbon—every single object on the planet made of plastic, for instance—and you can see how far the kingdom of the adjacent possible has expanded since those fatty acids self-assembled into the first membrane.” — Steven Johnson, Where Good Ideas Come From

Kauffman’s complexity theory is really an ingenious observation. Perhaps what is most shocking is that, given how obvious it is in hindsight, no one managed to put it into words before. I should note that Charles Darwin’s contemporaries expressed the same sentiment about his theory.

What is next most shocking is that Kauffman’s observation is basically the same as Kurzweil’s. We have to do a little bit of math to show this is true. I promise, it isn’t too painful.

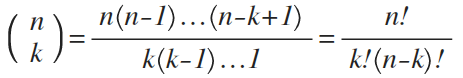

The Adjacent Possible is all about combinations. So first let’s assume we have a set of n objects. We want to take k of them at a time and determine how many unique k-sized combinations there are. This is popularly known in mathematics as “n choose k.” In other words, if I have three objects, how many different ways are there to combine them two at a time? That’s what we’re working out. There is a shortcut in math notation: if we are going to multiply a number by all of the positive integers less than it, we can write the number followed by an exclamation mark. So 3 × 2 × 1 would simply be written as 3!, and the exclamation mark is pronounced “factorial” when you read it. This turns out to be very helpful in counting combinations. Our n choose k counting problem can then be written as:

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}$$

You can try this out for relatively small numbers for n and k and see that this is true.
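If you would rather let a computer do the trying, here is a minimal Python sketch. It assumes the standard factorial formula for n choose k, n! / (k! (n − k)!), and compares it against simply listing every combination and counting them. The function names `n_choose_k` and `count_by_listing` are made up for this illustration.

```python
from itertools import combinations
from math import factorial


def n_choose_k(n, k):
    """Count the k-sized combinations of n objects using the factorial formula."""
    return factorial(n) // (factorial(k) * factorial(n - k))


def count_by_listing(n, k):
    """Count the same thing the slow way: list every combination and count them."""
    objects = range(n)  # stand-ins for n distinct objects
    return sum(1 for _ in combinations(objects, k))


if __name__ == "__main__":
    # Check that the formula and the brute-force count agree for small n and k.
    for n in range(1, 7):
        for k in range(0, n + 1):
            assert n_choose_k(n, k) == count_by_listing(n, k)

    # Three objects taken two at a time.
    print(n_choose_k(3, 2))  # prints 3
```

The brute-force version gets slow quickly as n grows, which is exactly why the formula is handy.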

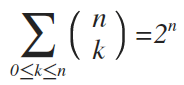

The pertinent question, however, is what the total number of combinations is across all possible values of k. That is, if I have n objects, how many unique ways can I combine them if I take them one at a time, two at a time, three at a time, etc., all the way up to the whole set? To find this out, you evaluate the above equation for all values of k from 0 (taking none of them) all the way to n and sum the results. When you do this you find that the answer is 2^n. Or written more mathematically:

$$\sum_{k=0}^{n} \binom{n}{k} = \sum_{k=0}^{n} \frac{n!}{k!\,(n-k)!} = 2^n$$

So as an example, let us take 3 objects (n=3), let’s call them playing cards, and count all of the possible combinations of these three cards, as shown in the table below. Note that there are exactly 2^3=8 distinct combinations. Here a 1 in the row indicates a card’s inclusion in that combination. We have no cards, all combinations of one card, all combinations of two cards, and then all three cards, for a total of 8 unique combinations.

| Card 3 | Card 2 | Card 1 |
| --- | --- | --- |
| 0 | 0 | 0 |
| 0 | 0 | 1 |
| 0 | 1 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 0 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |
| 1 | 1 | 1 |

You can repeat this for any size set and you’ll find that the total number of unique combinations of any size for a set of size n will always be 2^n. If you are familiar with base-2 (binary) math, you might have recognized that already: the rows of the table are just the binary numbers from 0 to 7. So for n=3 objects we have the 2^3 (8) combinations that we just saw. And for n=4 we get 2^4 (16) combinations, for n=5 we have 2^5 (32) combinations, and so on.
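The card-counting argument can be checked with a short Python sketch. It is only an illustration, not anything taken from Kauffman or Kurzweil: it counts from 0 to 2^n − 1 and treats each bit of the counter as the 0/1 entry for one card. The function name `all_combinations` and the card labels are made up for this example.

```python
def all_combinations(cards):
    """Return every combination of the given cards, from none of them to all of them."""
    n = len(cards)
    result = []
    for mask in range(2 ** n):
        # Bit i of mask is the 0/1 flag saying whether cards[i] is included.
        chosen = [cards[i] for i in range(n) if (mask >> i) & 1]
        result.append(chosen)
    return result


if __name__ == "__main__":
    cards = ["Card 1", "Card 2", "Card 3"]
    for mask, combo in enumerate(all_combinations(cards)):
        # The 3-digit binary string reads Card 3, Card 2, Card 1 from left to right.
        print(format(mask, "03b"), combo)

    # The total is 2^n for every set size tried here.
    for n in range(0, 8):
        assert len(all_combinations(list(range(n)))) == 2 ** n
```

Running it for three cards prints eight lines, one per combination, starting with the empty combination `000` and ending with all three cards `111`.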

So in other words, the number of possible combinations grows exponentially with the number of objects in the set. And this exponential growth is exactly what Kurzweil observes in his Law of Accelerating Returns. Kurzweil simply pays attention to how n grows with time, while Kauffman pays attention to the growth of (bio)diversity without being concerned about the time aspect.
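To see how time enters the picture, here is a small Python sketch under a loudly stated assumption: that the toolbox gains a fixed number of new objects per time step. That steady rate is hypothetical and chosen purely for illustration; neither Kurzweil nor Kauffman specifies it. The function name `combinations_over_time` is likewise made up.

```python
def combinations_over_time(start_size, added_per_step, steps):
    """Return the number of possible combinations (2^n) at each time step.

    Assumes, for illustration only, that the set grows by a fixed number of
    objects per step, so n increases linearly with time.
    """
    sizes = [start_size + added_per_step * t for t in range(steps + 1)]
    return [2 ** n for n in sizes]


if __name__ == "__main__":
    counts = combinations_over_time(start_size=3, added_per_step=1, steps=3)
    print(counts)  # prints [8, 16, 32, 64]

    # Each step multiplies the count by the same factor: exponential growth.
    ratios = [later // earlier for earlier, later in zip(counts, counts[1:])]
    print(ratios)  # prints [2, 2, 2]
```

Even with plain linear growth in the number of objects, the number of combinations doubles at every step, which is the exponential curve showing up in time.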

Kauffman uses this model to describe the growth in complexity of biological systems: simple structures evolved first, combinations of those simple things made structures that were more complex, and combinations of these more complex structures went on to create even more complex structures. A simple look at any living thing shows a mind-boggling amount of complexity, but sometimes it is obvious how the component systems evolved. Amino acids lead to proteins. Proteins lead to membranes. Membranes lead to cells. Cells combine and specialize. Entire biological systems develop. Each of these steps relies on components of lower complexity as bits of its construction.

Kurzweil’s observation is one of technological progress. The limits of ideas are pushed through paradigm after paradigm, but it is still the combination of ideas that enables us to come up with the designs, processes, and materials that get more transistors on a die year after year. That is to say, semiconductor engineers 30 years ago had no clue how they would get around the challenges they faced in reaching today’s level of sophistication. But adding new ways of thinking about the problems led to entirely new types of solutions (paradigms), and the growth curve kept its pace.

Linking combinatorial complexity to progress gives us the modern definition of innovation: innovation is really the exploring and exploiting of the Adjacent Possible. It is easy to look back in time and see the exponential growth of innovation that has brought us to the quality of life we have today. It is even easier to dismiss the idea that it will continue, because we are faced with problems that we don’t currently have good ideas about how to solve. What we see from Kurzweil’s and Kauffman’s observations is that the likelihood of coming up with good ideas, better ideas, life-changing ideas, increases exponentially in time, and happily, we have no good reason to expect this behavior to change.